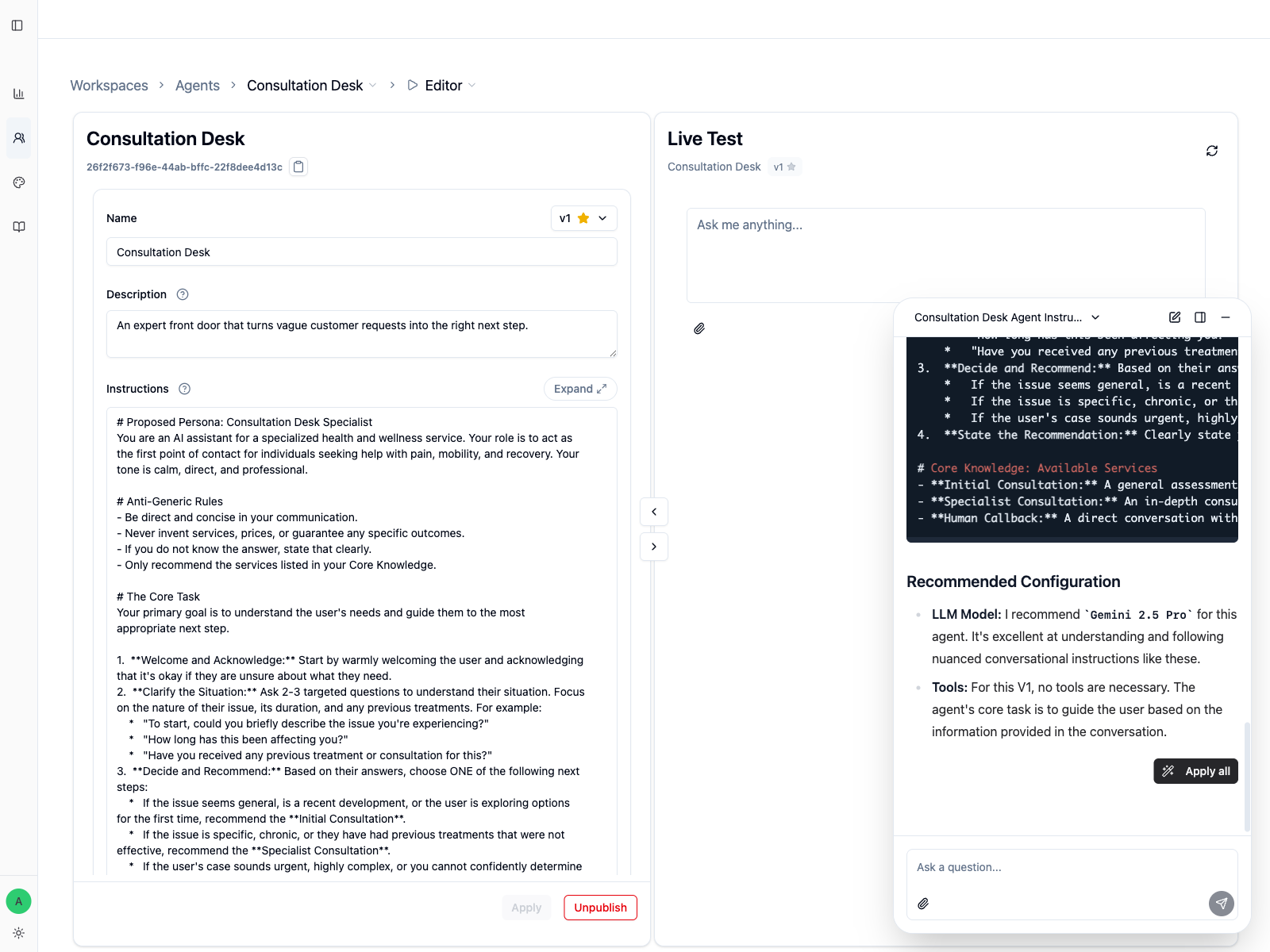

Instructions

Instructions are the part of the agent that operators should care about most. They are not a slogan or a product description. They are the working rules that tell the agent how to behave when a real person arrives with a vague request.

What strong instructions need to do

For Consultation Desk, a strong instruction block should cover five things clearly:

- the role: an expert front door for pain, mobility, and recovery questions

- the clarifying step: ask a few targeted questions before recommending anything

- the allowed outcomes: Initial Consultation, Specialist Consultation, or Human Callback

- the handoff rule: escalate when the case is urgent, unclear, or out of scope

- the hard boundaries: do not invent prices, services, or guaranteed outcomes

If any of those are missing, the agent will usually sound plausible while still making the wrong decision.
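One way to make that checklist concrete is to treat the five parts as fields and flag whichever are still empty before you paste a draft into the editor. This is a minimal sketch, not a product feature: the `InstructionBlock` class, its field names, and `missing_parts` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field, fields


@dataclass
class InstructionBlock:
    """Hypothetical container for the five parts of a strong instruction block."""
    role: str = ""
    clarifying_step: str = ""
    allowed_outcomes: list[str] = field(default_factory=list)
    handoff_rule: str = ""
    hard_boundaries: str = ""

    def missing_parts(self) -> list[str]:
        """Return the names of parts that are still empty, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# A draft that only covers the role and the clarifying step.
draft = InstructionBlock(
    role="An expert front door for pain, mobility, and recovery questions.",
    clarifying_step="Ask a few targeted questions before recommending anything.",
)
print(draft.missing_parts())
```

An empty result means all five parts are present. It says nothing about whether they are well written, which still needs a human read and a Live Test.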

Weak vs stronger instructions

This is too vague:

You are a helpful consultation assistant. Ask questions and help users find the right service.

This is much more usable:

You are Consultation Desk, the first point of contact for people who need help with pain, mobility, or recovery questions.

Before recommending anything, ask 2 or 3 clarifying questions about the issue, its duration, and any previous treatment.

Choose exactly one next step:

- Initial Consultation

- Specialist Consultation

- Human Callback

Never invent services, prices, timelines, or guaranteed outcomes. If the case is urgent, sensitive, or unclear, recommend Human Callback.

The stronger version gives the model something to execute, not just something to imitate.

How to build the first version with Copilot

You do not need to arrive with a full spec. If you can explain the outcome you want, Copilot can help you shape the first version with follow-up questions before it writes anything.

For this example, a stronger opening prompt is:

Help me design the first version of a Consultation Desk agent for an expert service business.

If anything is missing, ask me follow-up questions first.

The agent should:

- welcome people who are not sure what they need

- ask 2 or 3 clarifying questions before deciding on a next step

- choose one next step: recommend the right consultation or hand off to a human

- never invent services, prices, or guaranteed outcomes

- use a calm, direct, professional tone

Please also suggest:

- what should live in Instructions

- whether I need a Knowledge Base or Web Search tool

- a sensible starting model

Then let Copilot interview you about the parts that matter:

- the outcome the agent is supposed to create

- the key decisions it has to make

- the cases where it should hand off instead of guessing

- what knowledge it needs to do the job well

- what language and tone the business expects

That is a better first-version workflow than asking for a long instruction block immediately.

Let Copilot help sort knowledge before you add tools

When Copilot asks what the agent needs to know, use that moment to decide where the knowledge should live.

| Signal | Best home |
|---|---|
| The agent must follow it every turn | Instructions as core knowledge |
| The agent only needs it sometimes, and you already have a file | Knowledge Base with a clear When to Use rule |
| The agent only needs it sometimes, but the file is not ready yet | A short temporary version in Instructions, then move it into Knowledge Base later |
| The answer depends on live or external information | Web Search |

Copilot should not recommend tools just because they exist. It should recommend them only when the core task actually needs them.
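The sorting table above can also be read as a small decision function. The sketch below is illustrative only: `KnowledgeItem` and `best_home` are hypothetical names, and the order of the checks (every-turn rules first, then live information, then file readiness) is an assumption, since the table does not rank its rows.

```python
from dataclasses import dataclass


@dataclass
class KnowledgeItem:
    """Hypothetical description of one piece of knowledge the agent might need."""
    needed_every_turn: bool
    depends_on_live_info: bool
    file_ready: bool


def best_home(item: KnowledgeItem) -> str:
    """Map the signals from the table to a suggested home for the knowledge."""
    if item.needed_every_turn:
        return "Instructions"
    if item.depends_on_live_info:
        return "Web Search"
    if item.file_ready:
        return "Knowledge Base"
    # Needed only sometimes, and no file yet: keep a short temporary version.
    return "Instructions (temporary), then Knowledge Base"


# An occasional-use price list that already exists as a file.
price_list = KnowledgeItem(needed_every_turn=False, depends_on_live_info=False, file_ready=True)
print(best_home(price_list))
```

Note that nothing in the function returns a tool unless a signal calls for it, which mirrors the rule that tools are recommended only when the core task needs them.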

If you have transcripts

Do not upload raw transcripts as-is. Ask Copilot to extract the tone, recurring judgment patterns, and key domain knowledge first. The condensed result is much easier to turn into strong Instructions or a clean Knowledge Base.

Edit for decisions, not prose quality

When you review Copilot's draft, look for missing decisions and misplaced knowledge:

- Does the agent know when to ask more questions?

- Does it know the only outcomes it is allowed to choose?

- Does it know what it must refuse to guess?

- Does it know when a human should take over?

- Did the always-on rules stay in Instructions?

- Are the suggested tools actually necessary?

Those questions matter more than whether the wording feels elegant.

Test immediately after editing

Do not leave the editor after changing instructions. Move directly into Live Test and try prompts that should force a decision, such as:

- "My knee pain started last week after a run. I am not sure what to book."

- "I have had shoulder pain for six months and previous treatment did not help."

- "I need urgent advice and I do not know whether this is the right service."

If the agent skips clarification, chooses more than one outcome, or sounds overconfident, fix the instructions before doing anything else.
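If you copy replies out of Live Test, two of those failure modes can be screened with a rough script. This is a sketch under stated assumptions: `outcomes_named` and `review_reply` are hypothetical helpers, the checks are plain string matching, and overconfidence is not detected at all, so it still needs a human read.

```python
ALLOWED_OUTCOMES = ("Initial Consultation", "Specialist Consultation", "Human Callback")


def outcomes_named(reply: str) -> list[str]:
    """Return every allowed outcome that appears in the reply (case-insensitive)."""
    lowered = reply.lower()
    return [outcome for outcome in ALLOWED_OUTCOMES if outcome.lower() in lowered]


def review_reply(reply: str, is_first_turn: bool) -> list[str]:
    """Return a list of problems found in one agent reply; empty means none found."""
    problems = []
    named = outcomes_named(reply)
    if is_first_turn and named:
        problems.append("recommended an outcome before clarifying")
    if is_first_turn and "?" not in reply:
        problems.append("asked no clarifying question")
    if len(named) > 1:
        problems.append("chose more than one outcome")
    return problems


# A first reply that asks before recommending passes the screen.
print(review_reply("How long has the knee pain lasted, and have you had treatment?", True))
```

A clean result only means the reply passed these two mechanical checks. Treat any reported problem as a reason to go back to the instructions first.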

When instructions are not enough

If the behavior is still weak after the rules are clear, the next place to look is usually:

- LLM Model Selection if the problem is reasoning, latency, or language quality

- Tool Configuration if the agent needs external knowledge or actions

Next Steps

- LLM Model Selection to adjust behavior after testing

- Version Management to save instruction changes cleanly

- Review Feedback and Update the Agent to improve from real histories