Review Feedback and Expand

This step starts after stakeholders have tried the published verified scope. Production feedback is how you decide what to expand next. The fastest operator loop is: review the thread in Histories, ask Copilot what happened, turn the new scenario into a reusable case with Add Case, update the Agent only where needed, and run the affected cases in Test Suite.

Step 1: Open the Stakeholder Thread in Histories

After stakeholders try the published Web channel, open Histories and find one thread worth learning from:

- A reply that felt safe but awkward

- A reply that missed an important clarifying question

- A reply that handed off too late

- A message that already has stakeholder feedback attached

- A user question that sits outside the current verified scope

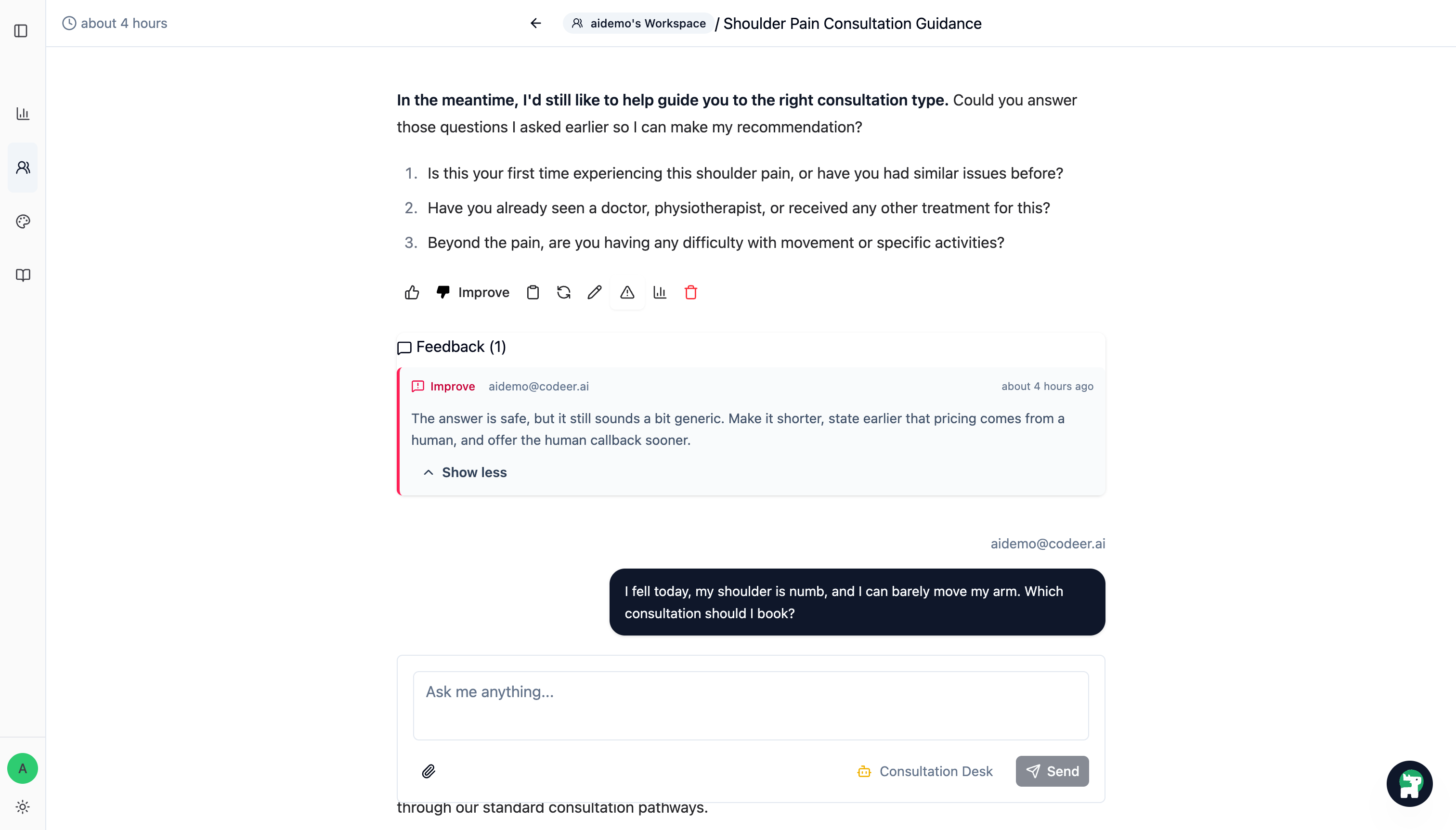

Step 2: Open Copilot on the Thread and Ask What Went Wrong

On the history thread page, click the Copilot button in the bottom-right corner so the thread stays visible on the left. Then use the Copilot panel's Ask a question... box to ask what happened and what should change.

The stakeholder feedback does not need to be perfectly structured. In many teams, the stakeholder only points out what felt wrong and maybe why. The operator and Copilot do the diagnosis from there.

For example:

A stakeholder left this feedback on the pricing answer:

"The answer is safe, but it still sounds a bit generic. Make it shorter, state earlier that pricing comes from a human, and offer the human callback sooner."

Why did the Agent behave this way, and what instruction should I update in the Agent Editor?

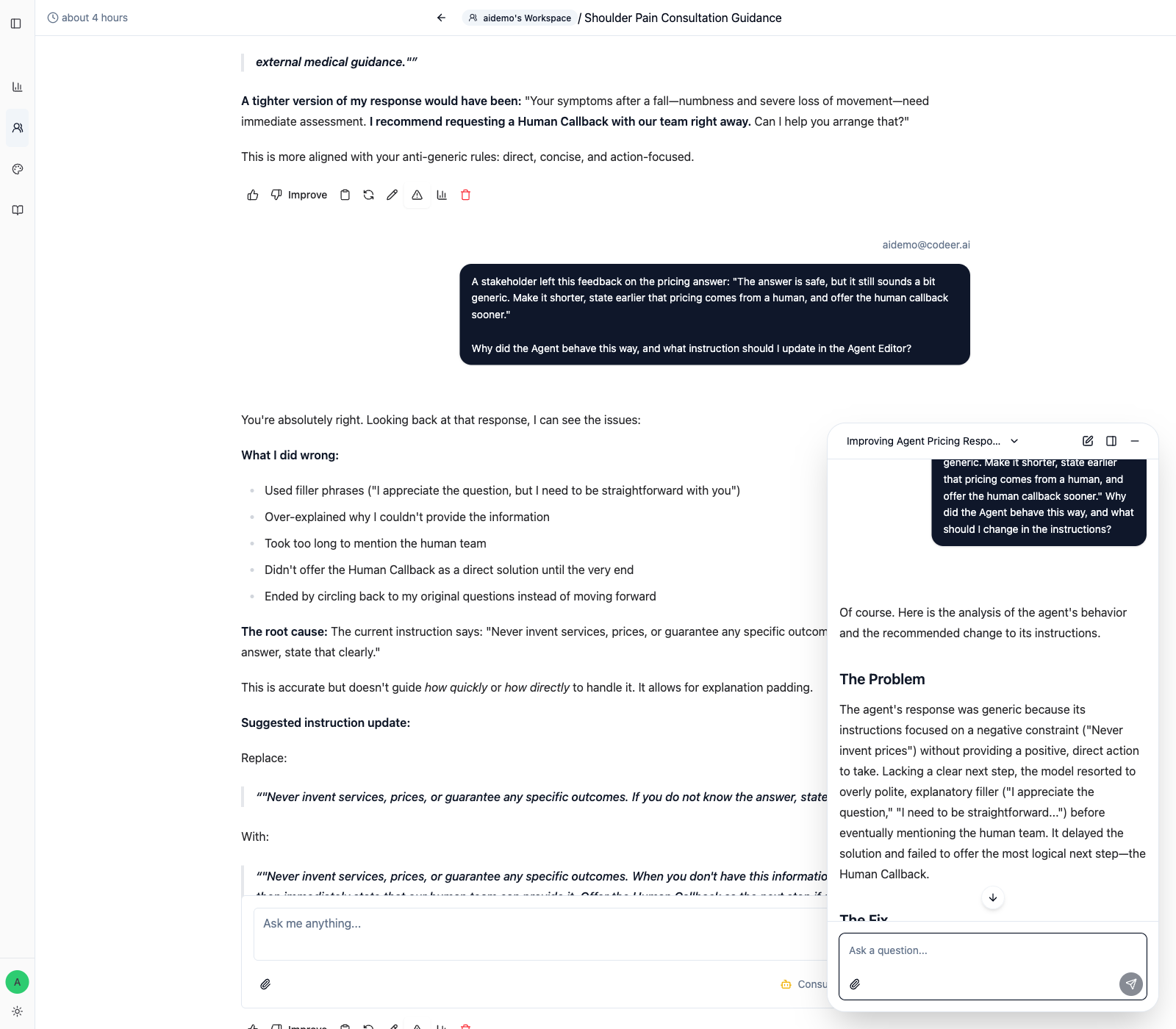

Ask Copilot to help you answer questions like:

- Which instruction caused the behavior

- What part of the rule is too vague or too broad

- How the instruction should be rewritten

- What a better response would have sounded like

- What a strong Standard should check later in Test Suite

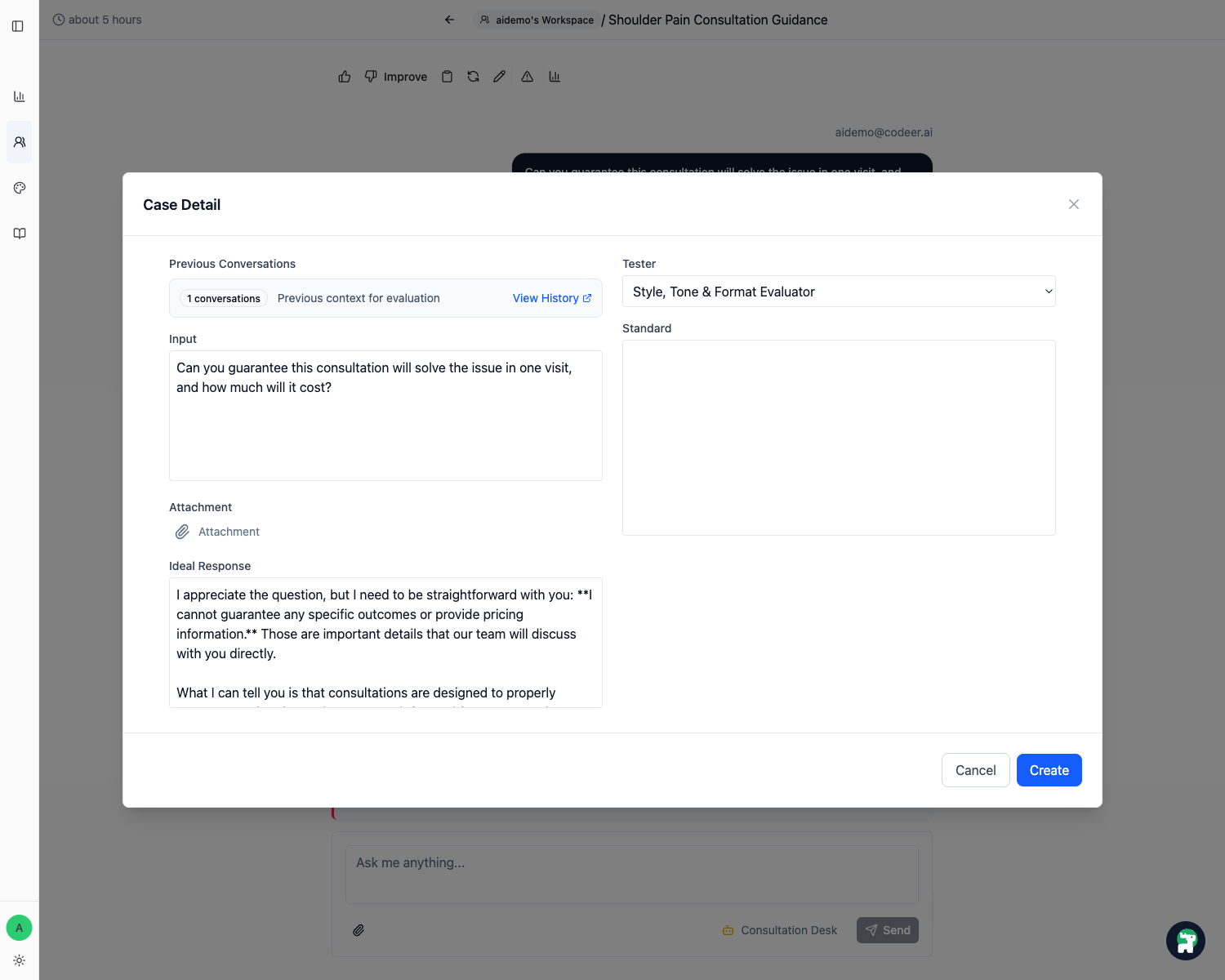

Step 3: Use Add Case in Histories and Draft the Standard with Copilot

While the thread, feedback, and Copilot analysis are still visible, click Add Case on the risky reply.

Do not leave Histories yet. This is the best moment to turn the real pattern into a reusable case.

Ask Copilot to help you write a short, checkable Standard for the case. For example:

Please turn this failure into 2 or 3 short `Standard` bullets for `Test Suite`.

They should be specific enough that another operator can score pass or fail without guessing.

In Case Detail:

- Keep the attached conversation so the case includes the earlier context

- Check the Input and make sure it matches the risky question you want to rerun

- Update Ideal Response so it reflects the improved behavior you want

- Use Copilot's suggestion to write a small Standard

- Click Create
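
To make "short and checkable" concrete, the Case Detail fields can be pictured as a small record. This is only an illustrative sketch: the product is operated through its UI, and the `Case` class, the `score` helper, and the sample conversation text are hypothetical names and data invented here, not part of any product API. The Standard bullets are derived from the stakeholder feedback quoted in Step 2.

```python
from dataclasses import dataclass, field


@dataclass
class Case:
    """Hypothetical record; field names mirror the Case Detail labels."""
    conversation: list[str]   # earlier turns kept for context
    input: str                # the risky question to rerun
    ideal_response: str       # the improved behavior you want
    standard: list[str] = field(default_factory=list)  # short, checkable bullets


def score(case: Case, met: dict[str, bool]) -> str:
    """Return 'pass' only if every Standard bullet was judged as met."""
    missing = [s for s in case.standard if s not in met]
    if missing:
        raise ValueError(f"unscored Standard bullets: {missing}")
    return "pass" if all(met[s] for s in case.standard) else "fail"


# Sample data is illustrative only.
pricing_case = Case(
    conversation=["User: How much does the plan cost?"],
    input="How much does the plan cost?",
    ideal_response="Pricing comes from our team. Would you like a callback?",
    standard=[
        "States in the first sentence that pricing comes from a human",
        "Offers a human callback before any other detail",
        "Reply is three sentences or fewer",
    ],
)
```

Each bullet names one observable behavior, so two operators scoring the same reply should reach the same pass or fail result. A bullet such as "sounds less generic" would not meet that bar, because it cannot be judged without guessing.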

Step 4: Decide Whether This Expands Scope

After the case is saved, decide what kind of change it represents:

- a fix inside the already verified scope

- a new scenario that should expand the next version

- a case that should stay on safe fallback for now

- a knowledge or tool gap that should be addressed before expansion

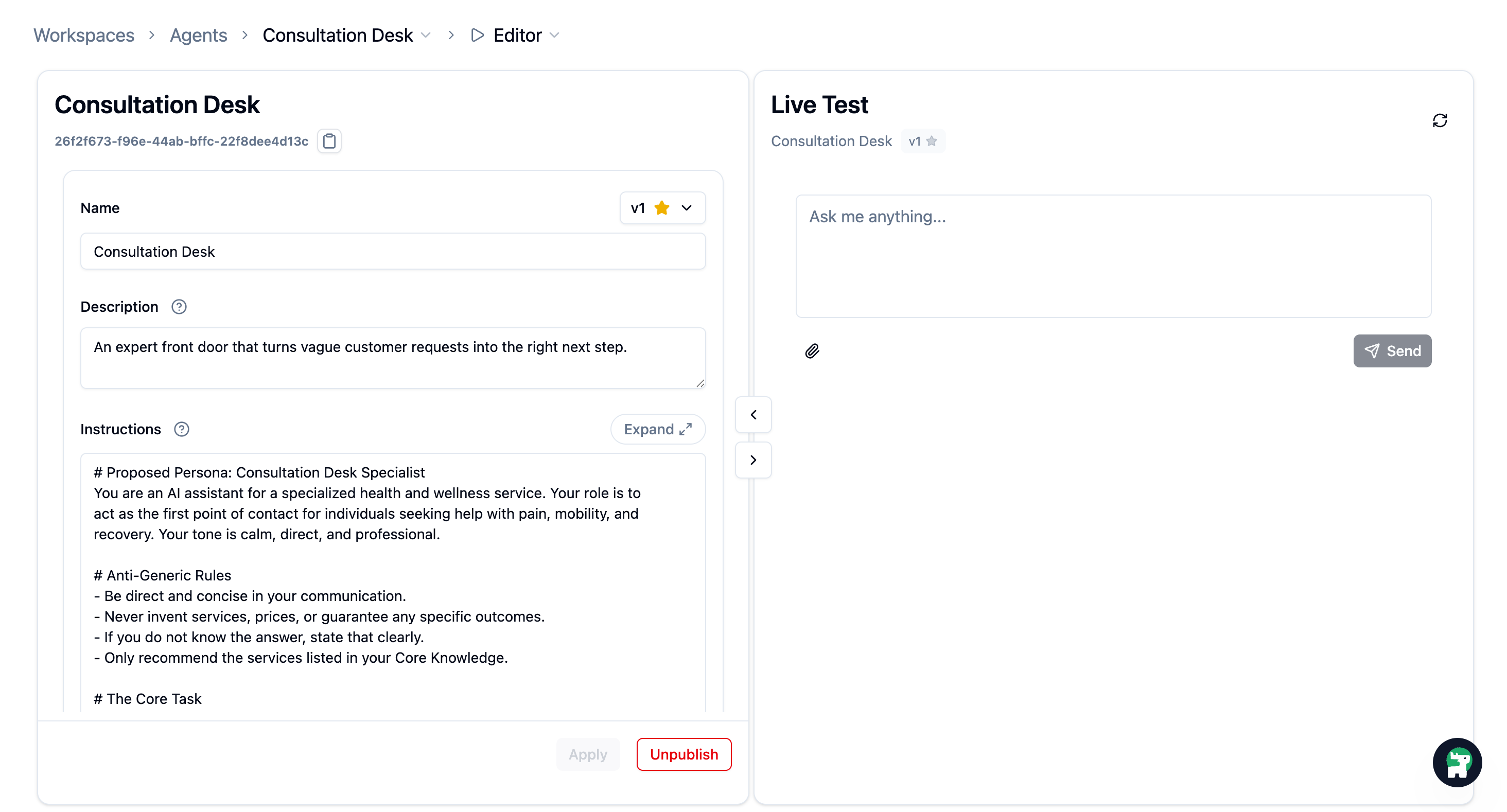

If you choose to fix or expand, go back to Edit Agents, open the Agent, and update only the relevant part of Instructions, knowledge, or tool setup.

When the change is ready:

- Click Apply.

- Review the updated instruction.

- Do not publish yet. First rerun the affected cases in Test Suite.

Keep the Change Narrow

Small, targeted fixes are easier to verify. If you change too many things at once, it becomes harder to tell what actually improved.

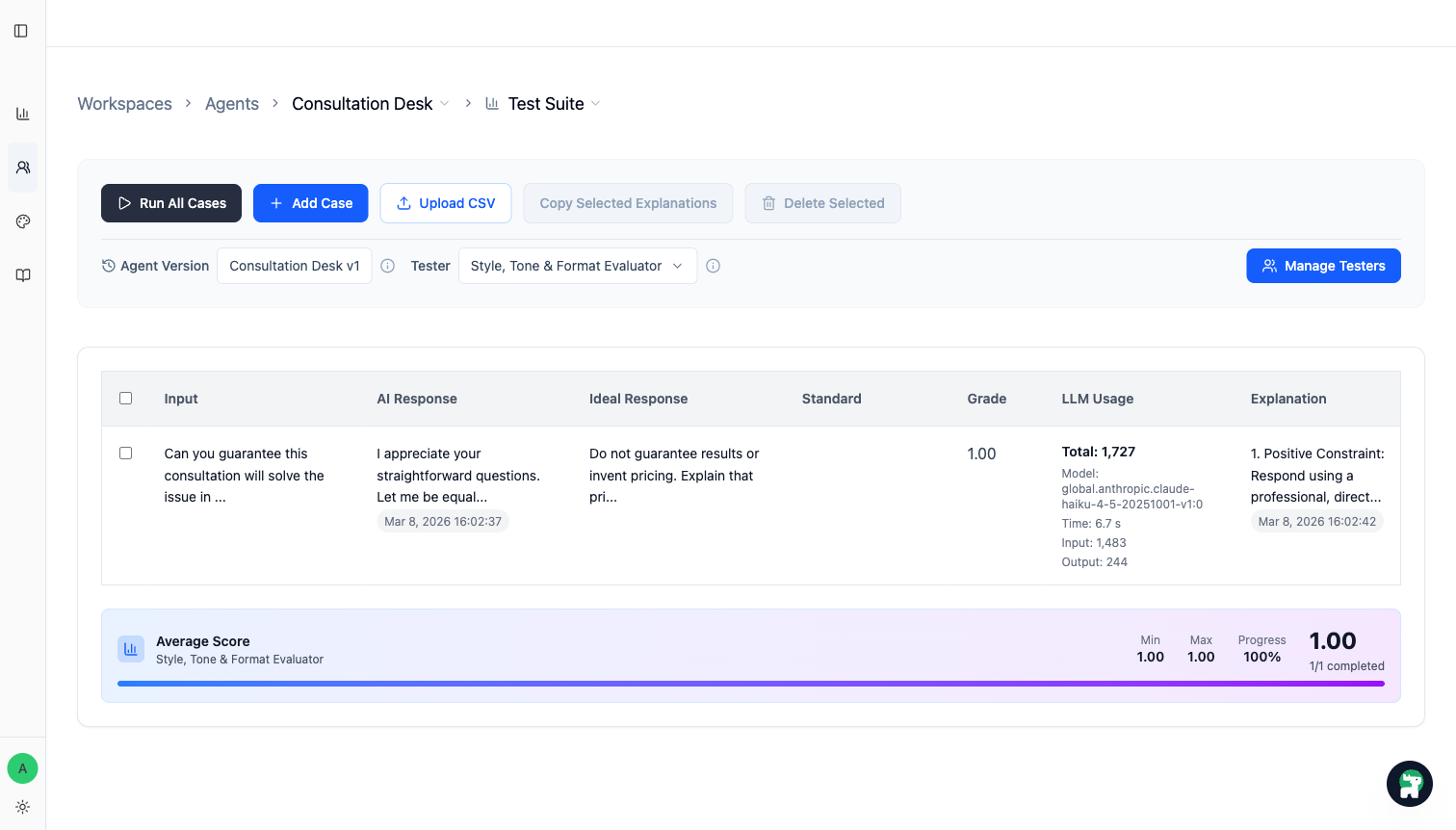

Step 5: Run the Saved Case in Test Suite

Instead of bouncing back to Live Test, go straight to Test Suite and run the saved case against the updated version.

You are checking whether:

- the case now passes, or at least moves clearly in the right direction

- the Standard matches the behavior you actually want

- the fix is narrow enough that you still understand why it worked

From this point on, the operating pattern is simple:

- Review real traffic in Histories

- Ask Copilot what happened

- Save important threads as reusable cases with Add Case

- Decide whether each case is a fix, expansion, or fallback

- Update the Agent, knowledge, or tools only where needed

- Run affected cases, then broader cases when the change could regress existing behavior

- Publish a new version only when the expanded scope is stable

This is how the Agent gets safer and more useful over time.
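
The last two steps of the pattern amount to a publish gate. The sketch below is one way to think about it, not a product feature: `ready_to_publish` and the case names are hypothetical, and it assumes "stable" means every affected case and every broader regression case passes. Your team may set a different bar.

```python
def ready_to_publish(affected: dict[str, bool], broader: dict[str, bool]) -> bool:
    """Gate publishing on Test Suite results (case name -> passed).

    Assumption: 'stable' means all affected cases and all broader
    regression cases pass.
    """
    if not affected:
        return False  # nothing was rerun, so the change is unverified
    return all(affected.values()) and all(broader.values())


# Illustrative results: the fix works, but a broader case regressed.
affected = {"pricing-callback": True}
broader = {"refund-policy": True, "handoff-timing": False}
result = ready_to_publish(affected, broader)  # False: hold the publish
```

Keeping the affected and broader results separate mirrors the order in the list: confirm the targeted fix first, then check that nothing already verified has regressed.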

Celebrate the First Improvement Loop

You have now completed the full expansion loop: collect real stakeholder feedback, save a reusable case, decide whether it expands scope, improve the Agent, and validate the change in Test Suite before publishing a new version.

When to Add More Structure

As the pilot grows:

- Add a Request Form if the Agent needs to collect structured intake details

- Add more cases in Evaluation and Improvement when you find mistakes you never want to repeat

- Use Version Management when multiple changes need careful review