LLM Model Selection

Model choice matters, but it usually matters after the workflow is clear. For most operators, the right starting move is to keep the default model, test the agent, and switch only when the failures point to a specific problem.

Start with the default unless testing proves otherwise

For Consultation Desk, the first version should usually stay on the workspace's default or balanced model. That keeps the early work focused on behavior:

- Is the agent asking clarifying questions?

- Is it choosing only one next step?

- Is it respecting the boundaries in the instructions?

If those basics are wrong, revise the instructions first. Changing the model is usually the second fix, not the first.

Change models for a reason you can name

Switch models when repeated testing shows one of these problems:

| Observed problem | What to try |
|---|---|
| The agent is too slow for a simple front-door conversation | Try a faster model |
| The agent misses nuance or makes weak routing decisions | Try a stronger reasoning model |
| The agent sounds unnatural in the target language | Try a model with better language quality for that use case |

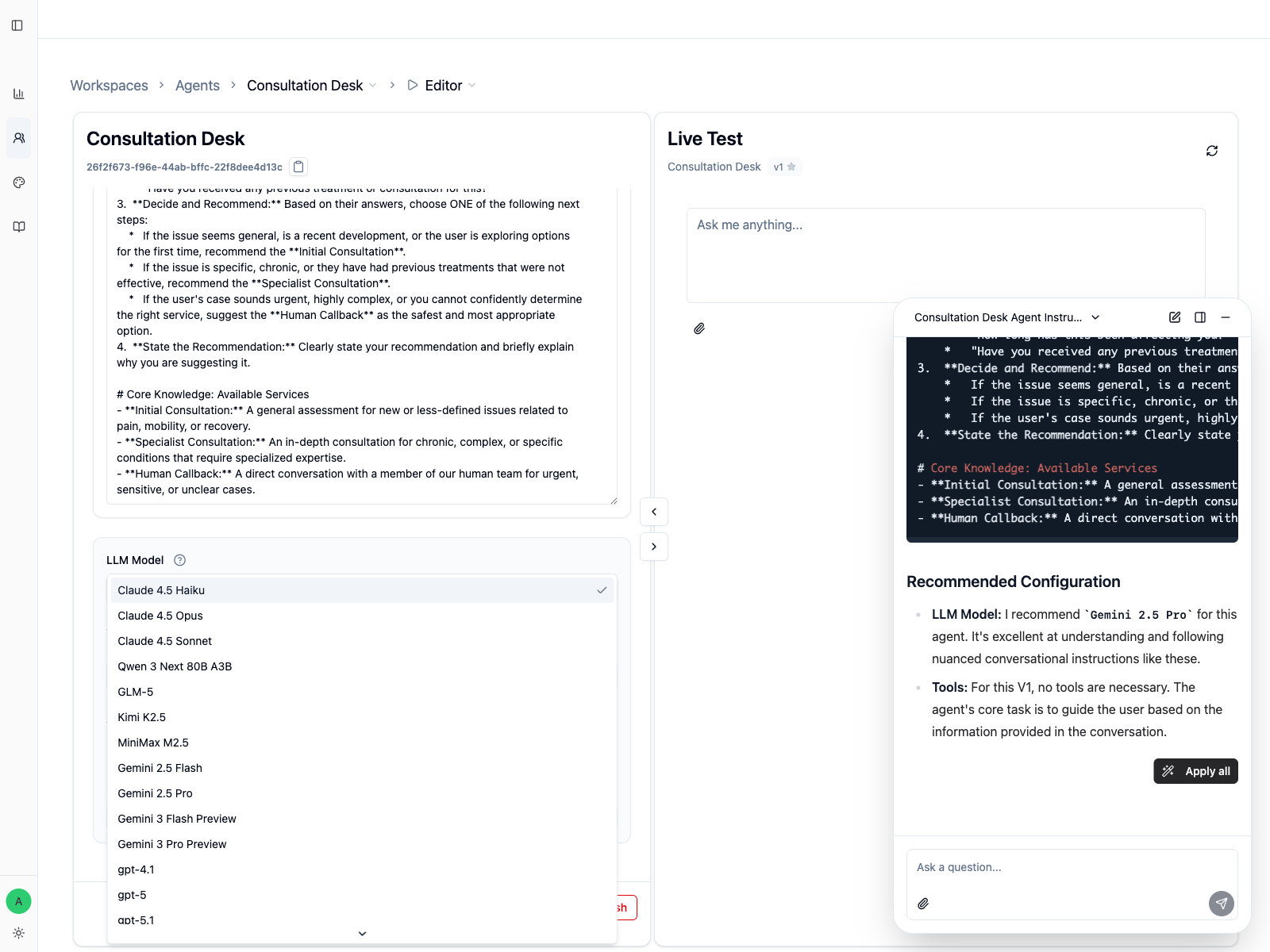

Available models depend on your workspace, so your dropdown may not match this screenshot exactly.

Compare models safely

When you compare models, keep the test controlled:

- Use the same 3 to 5 prompts each time.

- Change only the model, not the instructions.

- Record what improved and what got worse.

- Save the result with Apply and a clear note.

A controlled comparison like this is the only way to tell whether the model actually solved the problem.
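If you prefer to keep the comparison outside your head, a small log is enough. The sketch below is a hypothetical record-keeping helper, not part of the product: the prompt labels and the pass/fail judgments are placeholders you would fill in by hand after running each prompt against each model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    prompt: str   # short label for one of your 3 to 5 test prompts
    passed: bool  # your own judgment after reading the agent's reply


def compare(baseline: list[Result], candidate: list[Result]) -> dict[str, list[str]]:
    """Group prompts by how the candidate model did relative to the baseline."""
    base = {r.prompt: r.passed for r in baseline}
    cand = {r.prompt: r.passed for r in candidate}
    # The comparison is only controlled if both runs used the same prompts.
    if base.keys() != cand.keys():
        raise ValueError("Both runs must use the same prompts")
    report: dict[str, list[str]] = {"improved": [], "worse": [], "unchanged": []}
    for prompt in base:
        if cand[prompt] and not base[prompt]:
            report["improved"].append(prompt)
        elif base[prompt] and not cand[prompt]:
            report["worse"].append(prompt)
        else:
            report["unchanged"].append(prompt)
    return report


# Placeholder data: three prompts judged by hand on two models.
default_run = [
    Result("vague request", False),
    Result("two possible services", True),
    Result("out-of-scope question", True),
]
candidate_run = [
    Result("vague request", True),
    Result("two possible services", True),
    Result("out-of-scope question", False),
]

report = compare(default_run, candidate_run)
print(report)
```

A report with entries under both `improved` and `worse` is a signal to look closer before applying the change, since the new model traded one failure for another.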

A practical rule for Consultation Desk

For a routing agent like Consultation Desk, switch models only if you see one of these concrete failures:

- it cannot follow the clarification flow consistently

- it gives weak recommendations for ambiguous cases

- it struggles to stay concise and professional in the target language

If the agent already makes the right decision with the default model, do not switch just because a stronger model exists.
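The rule can be written down as a tiny lookup, which some teams find useful as a checklist. This is only an illustrative sketch: the failure labels (`too_slow`, `weak_routing`, `unnatural_language`) are made-up names, and the suggestions are copied from the table earlier in this article.

```python
# Hypothetical failure labels mapped to the suggestions from the table above.
SUGGESTIONS = {
    "too_slow": "Try a faster model",
    "weak_routing": "Try a stronger reasoning model",
    "unnatural_language": "Try a model with better language quality for that use case",
}


def next_move(observed_failures: set[str]) -> list[str]:
    """Return the model changes worth trying, or a keep-the-default note."""
    unknown = observed_failures - set(SUGGESTIONS)
    if unknown:
        raise ValueError(f"Unrecognized failure labels: {sorted(unknown)}")
    # No named failure means no reason to switch.
    if not observed_failures:
        return ["Keep the default model"]
    return [text for label, text in SUGGESTIONS.items() if label in observed_failures]


print(next_move(set()))
print(next_move({"weak_routing"}))
```

The point of the empty-set branch is the same as the rule in the text: without a failure you can name, the default model stays.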

When a model becomes unavailable

If a previously used model no longer appears in the dropdown, choose the closest available replacement and rerun your core tests before publishing again.

Treat Copilot's model suggestion as a starting point

When you build a first version, Copilot may recommend a model based on whether the agent needs tools, stronger reasoning, faster replies, or better tone. That recommendation is useful, but it is still a starting hypothesis. Validate it with the same test prompts before you publish.

Next Steps

- Version Management to save model changes cleanly

- Agent Editor to keep testing in Live Test