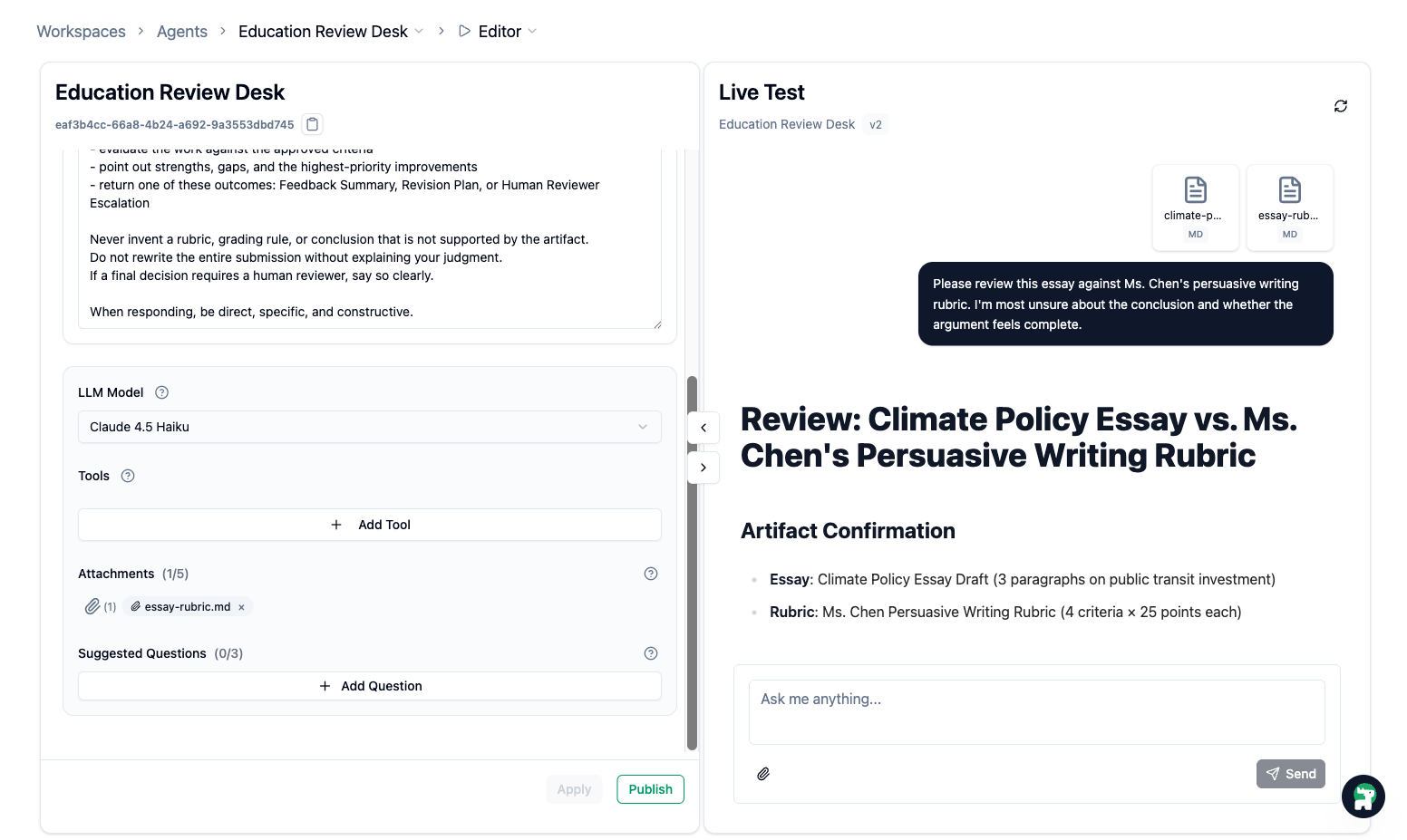

Education Review Desk

Education Review Desk is a strong non-intake example. The user already brings an artifact, and the agent's job is to review it against an approved rubric or standard, then return useful feedback plus a revision plan.

The setup is simple and grounded: keep the approved rubric in Attachments, upload the draft in Live Test, and let the agent return feedback plus a revision plan.

When This Pattern Fits

- The user uploads or pastes a draft, case write-up, or assignment.

- The team already has an approved rubric or review standard.

- The output should be feedback, revision guidance, or escalation to a human reviewer.

What the Agent Does

- Confirms what artifact it is reviewing and which rubric applies.

- Evaluates the work against the approved criteria.

- Points out strengths, gaps, and the highest-priority improvements.

- Returns a revision plan or escalates to a human reviewer when the case is outside scope.

Allowed Outcomes

- Feedback Summary

- Revision Plan

- Human Reviewer Escalation
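One way to keep the agent inside these three outcomes is to model them as a closed set in whatever code consumes the review. The sketch below is illustrative only: `Outcome`, `ReviewResult`, and `choose_outcome` are hypothetical names, and the decision rule (escalate when out of scope, otherwise plan revisions when gaps exist) is an assumption, not documented Codeer behavior. In practice a feedback summary and a revision plan may be returned together; the sketch picks a single primary outcome for simplicity.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    """The three allowed outcomes listed above (names are hypothetical)."""
    FEEDBACK_SUMMARY = "Feedback Summary"
    REVISION_PLAN = "Revision Plan"
    HUMAN_REVIEWER_ESCALATION = "Human Reviewer Escalation"


@dataclass
class ReviewResult:
    outcome: Outcome
    summary: str
    revision_steps: list[str] = field(default_factory=list)


def choose_outcome(in_scope: bool, gaps: list[str]) -> Outcome:
    """Pick a primary outcome. The rule itself is an assumption for illustration."""
    if not in_scope:
        # Cases outside the approved rubric's scope go to a human reviewer.
        return Outcome.HUMAN_REVIEWER_ESCALATION
    if gaps:
        # Gaps against the rubric call for concrete revision steps.
        return Outcome.REVISION_PLAN
    return Outcome.FEEDBACK_SUMMARY


result = ReviewResult(
    outcome=choose_outcome(in_scope=True, gaps=["conclusion repeats earlier points"]),
    summary="Evidence is strong; the conclusion needs a clearer 'so what?'.",
    revision_steps=["Restate the claim in one sentence."],
)
```

Because `Outcome` is an enum, any value outside the approved list fails loudly instead of slipping through as free text.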

What It Must Not Do

- Invent a rubric or grading standard that was not approved.

- Claim the work contains issues that it cannot support with evidence from the artifact.

- Rewrite the whole artifact without explaining the judgment.

- Present a final academic or policy decision if a human reviewer is required.
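The first two rules above can be sketched as a post-check on the agent's findings: each finding must name a criterion from the approved rubric and quote evidence that actually appears in the artifact. This is a minimal sketch under stated assumptions; `Finding` and `check_findings` are hypothetical helpers, and exact substring matching is a deliberately crude stand-in for whatever evidence check a real deployment would use.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    criterion: str  # rubric criterion the finding is judged against
    comment: str    # the judgment itself
    evidence: str   # quote from the artifact that supports the comment


def check_findings(findings, approved_criteria, artifact_text):
    """Split findings into supported ones and ones that break a guardrail."""
    supported, rejected = [], []
    for f in findings:
        if f.criterion not in approved_criteria:
            # Guardrail: do not invent a rubric or grading standard.
            rejected.append((f, "criterion not in approved rubric"))
        elif not f.evidence or f.evidence not in artifact_text:
            # Guardrail: do not claim issues the artifact cannot support.
            rejected.append((f, "evidence not found in artifact"))
        else:
            supported.append(f)
    return supported, rejected


# Hypothetical example data.
essay = "Carbon pricing works. In conclusion, carbon pricing works."
rubric = {"thesis clarity", "evidence quality", "counterarguments", "conclusion impact"}
findings = [
    Finding("conclusion impact", "Conclusion repeats the thesis.",
            "In conclusion, carbon pricing works."),
    Finding("formatting", "Margins are too narrow.", "Carbon pricing works."),
    Finding("evidence quality", "Statistic is outdated.", "In 1990, emissions were"),
]
supported, rejected = check_findings(findings, rubric, essay)
```

Rejected findings are better routed to a human reviewer or dropped than shown to the student as if they were grounded.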

Suggested Tools and Data

- Attachments for a small rubric, checklist, or exemplar that should stay available every turn.

- Knowledge Base for larger rubric libraries, policy notes, or curriculum guidance.

- Request Form only if a later workflow needs structured follow-up from the student or reviewer.

- HTTP Request, added later if the review result should be sent to an LMS or internal system.
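If the review result is later pushed to an LMS or internal system, the handoff might look roughly like the sketch below. Everything specific here is an assumption: the URL is a placeholder, the payload field names (`artifact_id`, `outcome`, `revision_steps`) are hypothetical, and the receiving system's real schema would replace them. The code only builds the request with Python's standard library and does not send it.

```python
import json
import urllib.request


def build_review_request(url, artifact_id, outcome, revision_steps):
    """Build (but do not send) a JSON POST carrying a review result.

    The payload shape is hypothetical; adapt it to the target system's schema.
    """
    payload = {
        "artifact_id": artifact_id,
        "outcome": outcome,
        "revision_steps": revision_steps,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_review_request(
    "https://lms.example.invalid/reviews",  # placeholder endpoint, not a real API
    artifact_id="essay-001",
    outcome="Revision Plan",
    revision_steps=[
        "Restate the claim in one sentence.",
        "Name the real-world consequence.",
        "Close with the policy action you want the reader to support.",
    ],
)
```

Sending would then be a call to `urllib.request.urlopen(request)` with whatever authentication the receiving system requires.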

Example Conversation

User: Can you review my climate policy essay? I'm not sure my conclusion is strong enough.

Agent: Yes. I'll review it against Ms. Chen's persuasive writing rubric. I'll focus on thesis clarity, evidence quality, counterarguments, and conclusion impact.

User: That's the right rubric.

Agent: Your evidence section is strong and well-supported, but the conclusion mostly repeats earlier points. The biggest improvement is to answer the "so what?" more directly. I've outlined a short revision plan: 1) restate the claim in one sentence, 2) name the real-world consequence, and 3) close with the policy action you want the reader to support.

Why This Pattern Matters

- It shows Codeer applying expert judgment, not just handling first-touch intake.

- It works well for education, QA, compliance review, and internal expert feedback loops.

- It makes approved standards and bounded judgment more visible to the reader.